Introduction to Support Vector Machine (SVM)

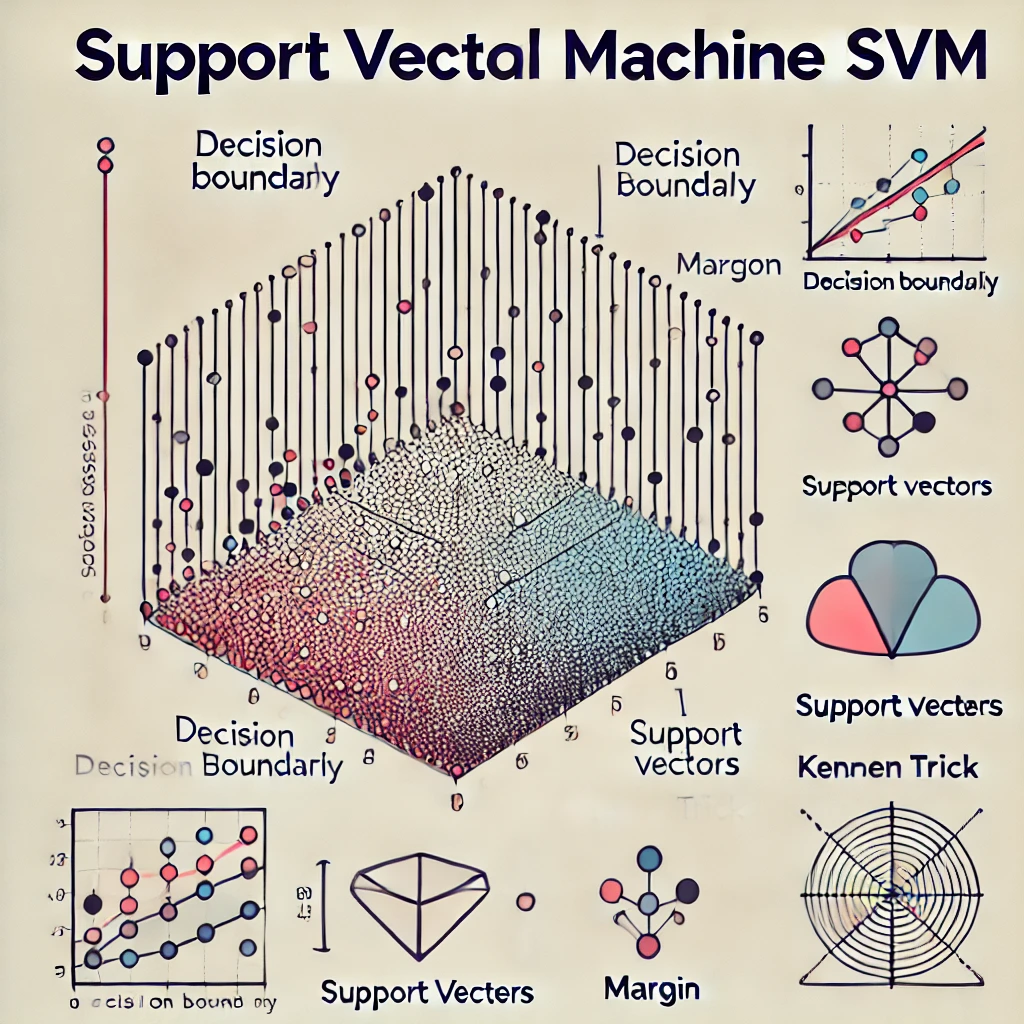

Support Vector Machine (SVM) is a powerful supervised machine learning algorithm used for classification and regression tasks. It is known for strong accuracy in high-dimensional spaces, which has made it a popular choice among data scientists. SVM works by finding the hyperplane that separates data points of different classes with the maximum margin, a choice that tends to generalize well to unseen data.

This method has been applied successfully in various domains, including image recognition, text classification, and bioinformatics, demonstrating its versatility and robustness. Understanding SVM not only adds a vital tool to a data scientist’s arsenal but also opens up numerous possibilities for solving complex problems in innovative ways.

In the next sections, we will delve into the history of SVM, its technical workings, practical applications, and how to implement it using Python.

History and Evolution of SVM

The origins of Support Vector Machine (SVM) date back to the 1960s, when Vladimir Vapnik and Alexey Chervonenkis developed the original maximum-margin linear classifier. However, it was not until the 1990s that SVM caught the attention of the broader research community. In 1992, Bernhard Boser, Isabelle Guyon, and Vapnik showed how to apply the kernel trick to maximum-margin classifiers, and in 1995 Corinna Cortes and Vapnik published the soft-margin formulation that is standard today. The kernel trick allowed SVM to work in high-dimensional feature spaces efficiently, making it significantly more powerful and versatile.

Over the years, SVM has evolved from a theoretical model of learning based on statistical learning theory to one of the most prominent algorithms in machine learning. Its ability to handle both linear and non-linear data, its robustness to overfitting, especially in high-dimensional space, and its applicability to a variety of classification and regression problems, have contributed to its widespread use and acclaim.

As SVM research progressed, numerous enhancements and extensions were developed, such as Support Vector Regression (SVR) and the use of different kernel functions to adapt the algorithm to specific types of data and problems, further cementing SVM’s status as a fundamental tool in the machine learning field.

Next, we will explore the core principles and workings of the SVM algorithm, providing a clearer understanding of its mechanism and capabilities.

Understanding the SVM Algorithm

At the heart of the Support Vector Machine (SVM) algorithm is the search for the best hyperplane separating the data points of different classes. A hyperplane is a decision boundary that divides the input space into regions, one for each class. The best hyperplane is the one with the maximum margin, where the margin is the distance between the hyperplane and the nearest data points from either class. Those nearest points are known as the support vectors.
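To make the ideas of margin and support vectors concrete, here is a minimal sketch using scikit-learn. The six data points are made up for illustration, and the very large `C` is an assumption used to approximate a hard margin on data that is linearly separable.

```python
import numpy as np
from sklearn.svm import SVC

# Toy, linearly separable data (invented for this illustration)
X = np.array([[0.0, 0.0], [1.0, 0.0], [0.0, 1.0],
              [3.0, 3.0], [4.0, 3.0], [3.0, 4.0]])
y = np.array([0, 0, 0, 1, 1, 1])

# A very large C approximates a hard-margin SVM
clf = SVC(kernel="linear", C=1e6).fit(X, y)

# For a linear kernel the hyperplane is w . x + b = 0
w = clf.coef_[0]
b = clf.intercept_[0]

# Distance from the hyperplane to the nearest points of either class
margin = 1.0 / np.linalg.norm(w)

print("support vectors:\n", clf.support_vectors_)
print("w =", w, "b =", b)
print("margin =", margin)
```

Only the points listed in `support_vectors_` determine the hyperplane; moving any other point slightly would leave the fitted boundary unchanged.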

SVM can handle both linear and non-linear classification. In linear classification, SVM looks for a straight line (or a hyperplane in higher dimensions) that separates the classes. In non-linear classification, SVM uses a technique known as the kernel trick, which implicitly maps the inputs into a higher-dimensional space where a linear separator is sufficient, without ever computing that mapping explicitly.
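The difference between the two cases can be seen on data that no straight line can separate. The sketch below assumes scikit-learn's `make_circles` toy dataset (two concentric rings) and compares a linear kernel with an RBF kernel. The scores are training accuracy, so they illustrate separability rather than generalization.

```python
from sklearn.datasets import make_circles
from sklearn.svm import SVC

# Two concentric rings: not separable by any straight line
X, y = make_circles(n_samples=200, factor=0.3, noise=0.05, random_state=0)

# Same algorithm, two different kernels
linear_acc = SVC(kernel="linear").fit(X, y).score(X, y)
rbf_acc = SVC(kernel="rbf").fit(X, y).score(X, y)

print(f"linear kernel training accuracy: {linear_acc:.2f}")
print(f"RBF kernel training accuracy:    {rbf_acc:.2f}")
```

The linear kernel should struggle on this data, while the RBF kernel can separate the rings because the boundary it learns is linear only in the implicit feature space.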

Key terms associated with SVM include:

- Hyperplane: The decision boundary that separates different classes.
- Margin: The distance between the hyperplane and the nearest data point from either class.
- Support Vectors: Data points that are closest to the hyperplane and influence its position and orientation.

Mathematical Foundation of SVM

The mathematical foundation of SVM involves solving an optimization problem that seeks to maximize the margin between classes while minimizing classification errors. This is achieved using Lagrange multipliers, which help in transforming the problem into a dual problem where it becomes easier to apply the kernel trick for non-linear classification.
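For reference, the optimization problem described above can be written out in its standard soft-margin form. The notation is an assumption, since the article does not fix one: $x_i$ are the training points, $y_i \in \{-1, +1\}$ their labels, $\xi_i$ the slack variables, $C$ the penalty on margin violations, $\alpha_i$ the Lagrange multipliers, and $K$ the kernel function.

```latex
% Primal problem: maximize the margin (minimize ||w||) while penalizing violations
\min_{w,\, b,\, \xi} \quad \frac{1}{2}\lVert w \rVert^2 + C \sum_{i=1}^{n} \xi_i
\qquad \text{subject to} \quad
y_i \left( w^\top x_i + b \right) \ge 1 - \xi_i, \quad \xi_i \ge 0

% Dual problem: the data appear only through K(x_i, x_j)
\max_{\alpha} \quad \sum_{i=1}^{n} \alpha_i
- \frac{1}{2} \sum_{i=1}^{n} \sum_{j=1}^{n} \alpha_i \alpha_j \, y_i y_j \, K(x_i, x_j)
\qquad \text{subject to} \quad
0 \le \alpha_i \le C, \quad \sum_{i=1}^{n} \alpha_i y_i = 0

% Resulting classifier: only points with alpha_i > 0 (the support vectors) contribute
f(x) = \operatorname{sign}\left( \sum_{i=1}^{n} \alpha_i \, y_i \, K(x_i, x) + b \right)
```

Because the dual depends on the data only through $K(x_i, x_j)$, replacing the plain inner product $x_i^\top x_j$ with a non-linear kernel changes the feature space without changing the form of the problem.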

Applications of SVM

SVM’s versatility allows it to be applied in various fields such as:

- Image Recognition: Identifying objects within images.
- Text Classification: Categorizing documents or emails into different topics.
- Bioinformatics: Classifying proteins and predicting gene functions.
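As an illustration of the text classification use case, the sketch below pairs a TF-IDF vectorizer with a linear-kernel SVM. The four training sentences and the "spam"/"ham" labels are invented for this example. A real application would need far more data and a held-out test set.

```python
from sklearn.feature_extraction.text import TfidfVectorizer
from sklearn.pipeline import make_pipeline
from sklearn.svm import SVC

# Tiny made-up corpus, for illustration only
train_texts = [
    "cheap pills buy now",
    "win money prize now",
    "meeting schedule tomorrow",
    "project report attached",
]
train_labels = ["spam", "spam", "ham", "ham"]

# Turn text into TF-IDF features, then fit a linear SVM on them
model = make_pipeline(TfidfVectorizer(), SVC(kernel="linear"))
model.fit(train_texts, train_labels)

predictions = model.predict(["buy cheap prize", "project meeting report"])
print(predictions)
```

Text features are typically sparse and high-dimensional, which is the setting where linear SVMs tend to work well.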

Implementing SVM with a Sample Code Snippet

Using Python’s scikit-learn library, implementing SVM is straightforward. Here’s a simple example:

    from sklearn import svm

    # Prepare data: two training points, one per class
    X = [[0, 0], [1, 1]]
    y = [0, 1]

    # Define the model (RBF kernel by default)
    model = svm.SVC()

    # Train the model
    model.fit(X, y)

    # Predict the class of a new point
    print(model.predict([[2., 2.]]))
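A slightly more realistic workflow adds a train/test split and feature scaling, since SVMs are sensitive to the scale of the inputs. The following is a sketch under assumptions: the Iris dataset bundled with scikit-learn stands in for real data, and the split ratio and hyperparameters are illustrative defaults rather than tuned values.

```python
from sklearn.datasets import load_iris
from sklearn.model_selection import train_test_split
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler
from sklearn.svm import SVC

# Load a small built-in dataset (150 samples, 4 features, 3 classes)
X, y = load_iris(return_X_y=True)

# Hold out 25% of the data for evaluation
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.25, random_state=0, stratify=y
)

# Scale features, then fit an RBF-kernel SVM
model = make_pipeline(StandardScaler(), SVC(kernel="rbf", C=1.0, gamma="scale"))
model.fit(X_train, y_train)

# Accuracy on the held-out data
accuracy = model.score(X_test, y_test)
print(f"test accuracy: {accuracy:.2f}")
```

Wrapping the scaler and the classifier in one pipeline means the scaling statistics are learned from the training split only, which avoids leaking information from the test set.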

Challenges and Limitations of SVM

While SVM is powerful, it has limitations, such as:

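- Sensitivity to the choice of kernel and its hyperparameters, such as the regularization parameter C and the kernel coefficient gamma.
- Poor performance on very large datasets, because training time grows faster than linearly with the number of samples.
- Difficulty in interpreting the final model, especially with non-linear kernels.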
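The first limitation is usually handled by searching over candidate settings with cross-validation. Here is a minimal sketch using scikit-learn's `GridSearchCV`. The Iris dataset and the particular grid values are assumptions chosen to keep the example small, not recommended settings.

```python
from sklearn.datasets import load_iris
from sklearn.model_selection import GridSearchCV
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler
from sklearn.svm import SVC

X, y = load_iris(return_X_y=True)

# make_pipeline names the SVC step "svc", hence the "svc__" prefix below
pipeline = make_pipeline(StandardScaler(), SVC())

# Illustrative grid; gamma is ignored by the linear kernel
param_grid = {
    "svc__kernel": ["linear", "rbf"],
    "svc__C": [0.1, 1, 10],
    "svc__gamma": ["scale", 0.1, 1.0],
}

# 5-fold cross-validation over every combination in the grid
search = GridSearchCV(pipeline, param_grid, cv=5)
search.fit(X, y)

print("best parameters:", search.best_params_)
print(f"best cross-validated accuracy: {search.best_score_:.2f}")
```

The cost of the search grows with the size of the grid and the dataset, so on larger problems a coarser grid or a randomized search may be more practical.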

Conclusion

Support Vector Machine (SVM) remains a fundamental and widely used machine learning algorithm. Its ability to efficiently handle high-dimensional data and perform both linear and non-linear classification makes it a versatile tool. By understanding its principles, applications, and limitations, data scientists can leverage SVM to tackle a wide range of problems effectively.
